Pick and place for textured objects

Reliable, cost-effective gripping for textured or labeled objects, ideal for unstructured environments


Intelligent gripping system

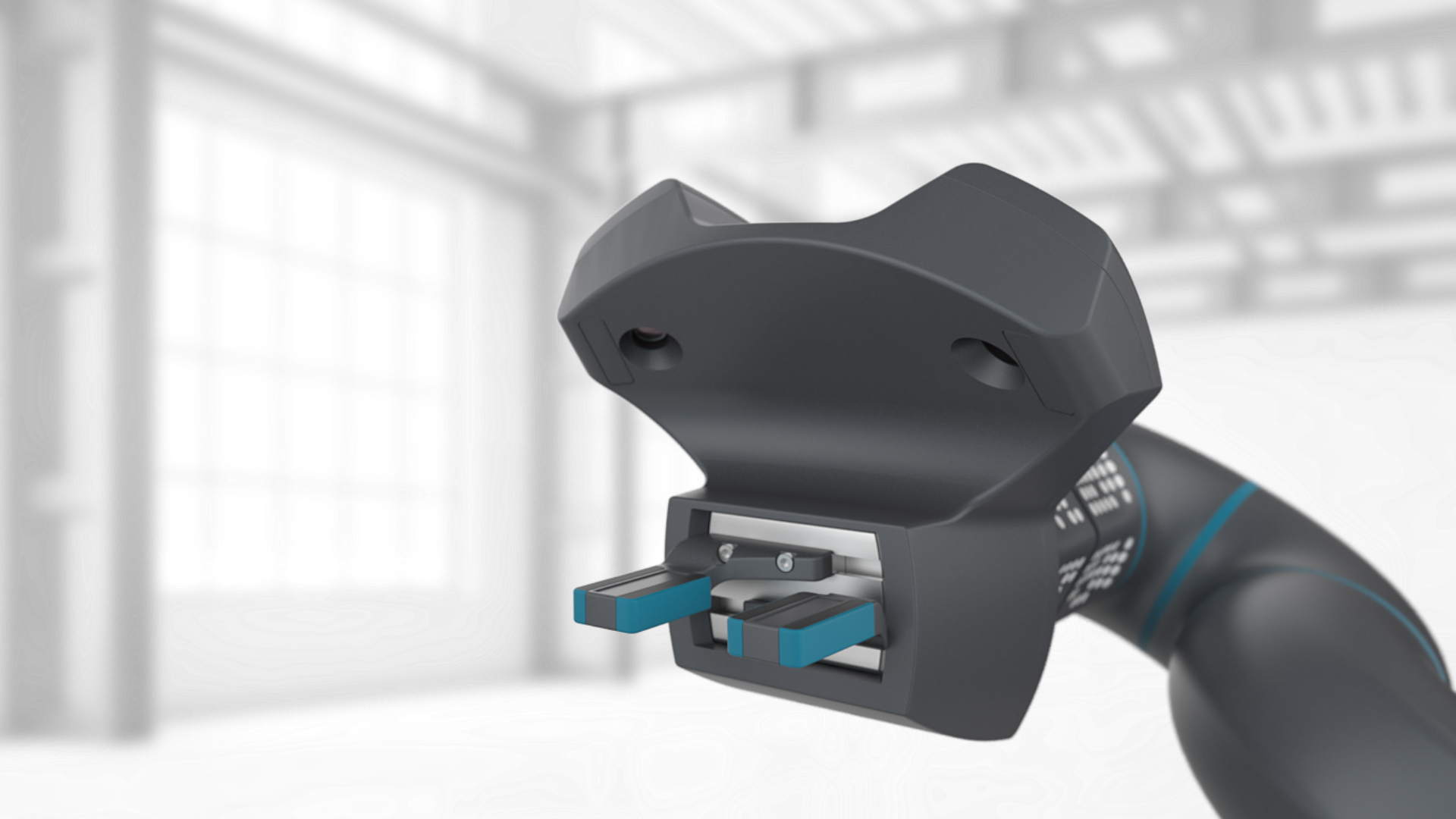

A gripping system providing the sense of touch

Today’s grasping solutions are often inflexible: fingers are tailored to a single object, and sensory feedback from the grasping process is very limited. Our intelligent gripping system is a ready-to-use solution for flexible and sensitive grasping applications. It integrates an industrial gripper, a stereo vision system, and our camera-based sensors for tactile data and, optionally, force/torque. The rubber foam on the fingers ensures a secure grasp of various objects. At the same time, it serves as the sensitive element for our camera-based tactile sensors. The integrated sensing software provides rich feedback about the grasping process. The control features enable grasping of a wide variety of objects with a single gripper and finger set, and allow fragile parts to be handled without time-consuming reprogramming.

Highlights

Versatile sensitive grasping

Stereo vision system

Fully integrated

Rich sensor data

Object recognition (optional)

Quality control (optional)

Our technical solution

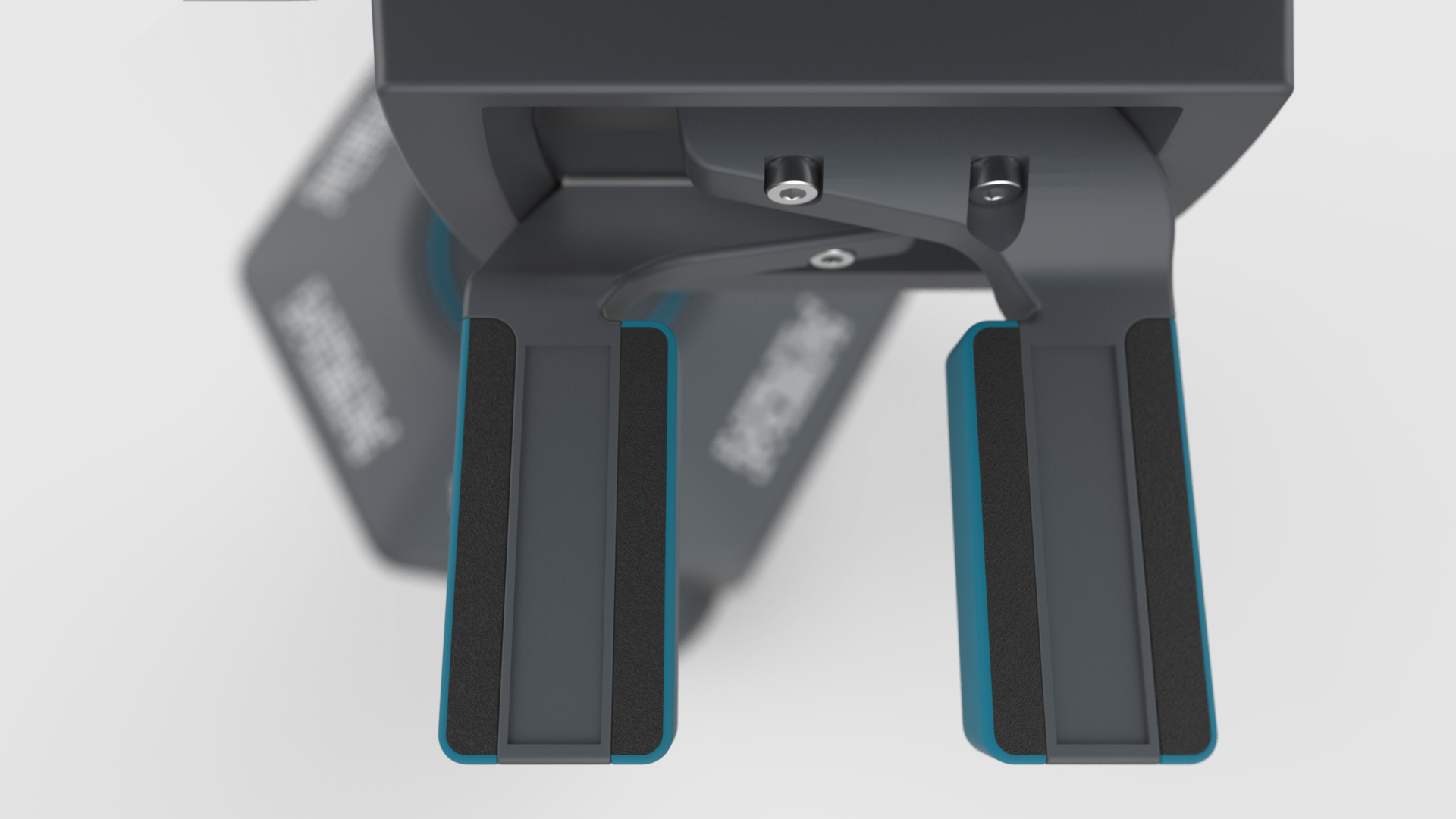

Our intelligent gripping solution integrates two grayscale/color cameras, an industrial 2-jaw gripper, as well as universal fingers in a compact package with a standard mount.

The fingers are equipped with a layer of rubber foam to support camera-based tactile sensing.

The core of our sensing technology is image processing software that derives physical sensor signals from observing the deformation of the rubber foam during contact. The sensor functionality is thereby shifted from complex, dedicated hardware modules into our intelligent sensor software. This software provides rich feedback about the grasping process, including the tactile/pressure profile, grasping force, gripper opening, object position and count, object shape, object deformation, and detailed grasping statistics. Using this data, our multi-goal grasp controller enables intelligent and flexible grasping of varying objects.
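To make the idea of deriving a force signal from observed foam deformation more concrete, here is a minimal sketch. It is not the product's actual algorithm: the brightness threshold, the linear spring model, and every name and constant in it (`foam_thickness_px`, `compression_profile_mm`, `estimate_force`, `MM_PER_PX`, `STIFFNESS_N_PER_MM`, `FOAM_THRESHOLD`) are assumptions chosen purely for illustration.

```python
# Illustrative only: estimate a contact force from foam compression seen in
# grayscale image columns. All names and constants are hypothetical.

MM_PER_PX = 0.1            # assumed image scale (mm per pixel)
STIFFNESS_N_PER_MM = 4.0   # assumed linear foam stiffness per column (N per mm)
FOAM_THRESHOLD = 60        # assumed: foam pixels are darker than this value


def foam_thickness_px(column):
    """Count pixels in one image column that are dark enough to be foam."""
    return sum(1 for value in column if value < FOAM_THRESHOLD)


def compression_profile_mm(reference, contact):
    """Per-column foam compression (mm) between an unloaded and a loaded image.

    Both images are lists of columns; each column is a list of gray values.
    """
    profile = []
    for ref_col, con_col in zip(reference, contact):
        delta_px = foam_thickness_px(ref_col) - foam_thickness_px(con_col)
        profile.append(max(delta_px, 0) * MM_PER_PX)
    return profile


def estimate_force(profile_mm):
    """Treat each column as an independent linear spring and sum the forces."""
    return STIFFNESS_N_PER_MM * sum(profile_mm)


# Tiny synthetic example: 3 columns, foam is 5 px thick when unloaded.
reference = [[30] * 5 + [200] * 5 for _ in range(3)]
contact = [
    [30] * 5 + [200] * 5,   # no contact
    [30] * 3 + [200] * 7,   # compressed by 2 px
    [30] * 4 + [200] * 6,   # compressed by 1 px
]
profile = compression_profile_mm(reference, contact)
force = estimate_force(profile)
```

In this toy model the per-column compression plays the role of a tactile profile and the summed spring force stands in for the grasping force. A real system would need calibration of the foam material and of the camera geometry.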

Our patented sensor principle allows you to simply replace or adapt the gripper's fingers. No wiring is needed in the fingers, because the rubber foam is completely passive and contains no integrated electronics.

At the same time, the cameras can be used for other tasks, such as object recognition and localization. Please contact us to discuss your computer vision requirements.

Your benefits

Save costs

Simple usage

Reduced integration time

No feeding systems

Fewer gripper variants

Holistic data generation

Technical data*

| Robotic gripper | |
|---|---|
| Gripper module | Gimatic 2-jaw electric parallel gripper |
| Opening | 2x 15 mm, controllable |
| Grasping force | Max. 63 N, controllable |
| Fingers | Length: 60 mm, soft surface, straight shape |
| Adaptation | Length/shape of fingers, custom fingers |
| Grasping modes | Internal/external grasping |
| Visual markers | 1 per finger |
| Sensitive element | EPDM (rubber foam), see tactile sensor |
| Flange | 4x M6, Ø 50 mm (ISO 9409-1), e.g. for UR3/5 |
| Connections | Power 24 V, 1 A; USB3 (cameras, gripper control) |

| Cameras | |
|---|---|
| Resolution | 2x 1280x960, 45 fps (up to 80 fps) |
| Sensor | 1/3”, global shutter, color/mono |
| Interface | USB3, UVC standard |
| Distance from fingers | ca. 150 mm |
| Position | 2 cameras integrated in stereo camera attachment |
| Field of vision | Fingers, grasped object, environment beyond fingers |

| RoVi software for sensing and control | |
|---|---|
| Sensing (camera-based) | Tactile profile (pressure profile), grasping force, object detection / position / count, object deformation, gripper opening, grasp statistics |
| Grasp control | Multi-goal: position, current, force, object deformation |
| Integration | ROS (Industrial) |
| System requirements | Ubuntu Linux, Intel i5 2 GHz, 8 GB RAM |
| Plug & Play | Ready-to-use USB stick |
| Scripting | Simplified Python scripting. Integrated API for grasping, motion commands, sensing, object detection, camera access. |

* The technical data describes the current prototype and is only preliminary.
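The multi-goal grasp control listed in the technical data can be pictured as a closing loop that stops as soon as the first of several goals is reached. The following is a rough sketch under stated assumptions, not the RoVi API: `Goals`, `close_until_goal`, `soft_object`, the step size, and the toy object model are all hypothetical, and the motor-current goal is left out for brevity.

```python
# Illustrative only: a multi-goal closing loop that stops at the first goal
# reached. Names, the sensor callback and the toy object model are
# hypothetical and do not represent the RoVi API.
from dataclasses import dataclass


@dataclass
class Goals:
    min_opening_mm: float      # position goal: do not close beyond this
    max_force_n: float         # force goal
    max_deformation_mm: float  # object deformation goal


def close_until_goal(read_sensors, goals, start_mm=15.0, step_mm=0.5):
    """Close one jaw step by step; return (opening_mm, reason for stopping)."""
    opening = start_mm
    while True:
        force_n, deformation_mm = read_sensors(opening)
        if force_n >= goals.max_force_n:
            return opening, "force"
        if deformation_mm >= goals.max_deformation_mm:
            return opening, "deformation"
        if opening - step_mm < goals.min_opening_mm:
            return opening, "position"
        opening -= step_mm


def soft_object(opening_mm, contact_at_mm=10.0, stiffness_n_per_mm=2.0):
    """Toy sensor model: an elastic object first touched at 10 mm opening."""
    squeeze = max(contact_at_mm - opening_mm, 0.0)
    return stiffness_n_per_mm * squeeze, squeeze


# A fragile part: the deformation limit is hit before the force limit.
opening, reason = close_until_goal(soft_object, Goals(0.0, 5.0, 1.5))
```

Tightening or relaxing an individual goal changes which condition ends the grasp, which is one way a single finger set could handle both rigid and fragile parts without reprogramming the motion itself.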

Other products

Camera-based tactile sensor

The sense of touch for robot grippers! Our solution relies on a passive sensitive element and provides tactile data from image processing.

VisePick Low Cost Pick and Place

Low-cost robot arm with camera-based joint position sensors for small-scale automation applications with integrated vision

ViseGrip Intelligent Gripping Solution

Gripping system for sensitive handling and flexible grasping with integrated cameras and camera-based tactile sensors on the fingers

ViseGrip for E-Commerce

Reliable, cost-effective gripping for textured or labeled objects, ideal for unstructured environments and easily trained without any CAD data.